Follow Me: Real-Time in the Wild Person Tracking Application for Autonomous Robotics

Thomas Weber, Sergey Triputen, Michael Danner, Sascha Braun, Kristiaan Schreve, and Matthias Rätsch

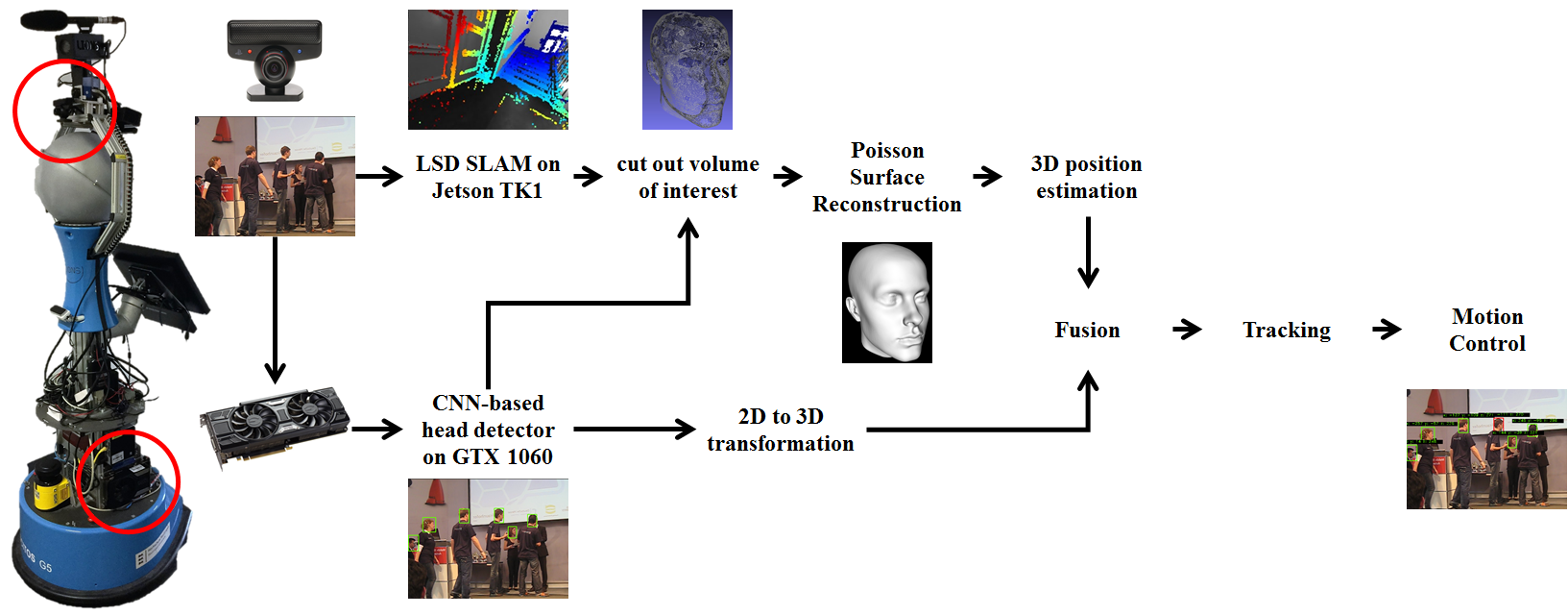

Abstract. In the last 20 years there have been major advances in autonomous robotics. In IoT (Industry 4.0), mobile robots require more intuitive ways of interacting with humans in order to expand their field of applications. This paper describes a user-friendly setup that enables a person to lead the robot through an unknown environment. The environment has to be perceived by means of sensory input. To realize a cost- and resource-efficient Follow Me application, we use a single monocular camera as a low-cost sensor. For efficient scaling of our Simultaneous Localization and Mapping (SLAM) algorithm, we integrate an inertial measurement unit (IMU) sensor. With the camera input we detect and track a person. We propose combining state-of-the-art deep learning based on a Convolutional Neural Network (CNN) with SLAM functionality on the same input camera image. Based on the output, robot navigation is possible. This work presents the specification and workflow for an efficient development of the Follow Me application. The point clouds delivered by our application are also used for surface construction. For demonstration, we use our platform SCITOS G5 equipped with the aforementioned sensors. Preliminary tests show that the system works robustly in the wild.